I’ve seen a few guides around about this, but they seem to be mainly focused on using Intune, which has the significant downside of not being able to handle systems that Intune doesn’t support, such as servers and air-gapped systems.

Now, this is just the way I’ve been doing it. It’s not perfect, but it’s significantly better than nothing…

I’m going to do something different in this article and start with the…

References

Secure Boot Certificate updates: Guidance for IT professionals and organizations – Microsoft Support

Act now: Secure Boot certificates expire in June 2026 – Windows IT Pro Blog

Secure Boot Certificate Changes in 2026: Guidance for RHEL

Secure Boot playbook for certificates expiring in 2026

https://support.hp.com/us-en/document/ish_13070353-13070429-16

https://knowledge.broadcom.com/external/article/423893

What is Secure Boot?

- Secure Boot is a UEFI firmware security feature designed to ensure that only trusted, digitally signed boot components are allowed to run during system startup.

- When Secure Boot is enabled:

- The system firmware verifies the signature of the bootloader

- The bootloader verifies the next stage (e.g. OS loader / kernel)

- Anything that is unsigned or signed with an untrusted certificate is blocked

This helps protect against boot-level malware (e.g. rootkits), but it also means systems are dependent on valid signing certificates stored in firmware.

Secure Boot certificate expiration

The configuration of certificate authorities (CAs), also referred to as certificates, provided by Microsoft as part of the Secure Boot infrastructure, has remained the same since Windows 8 and Windows Server 2012. These certificates are stored in the Signature Database (DB) and Key Exchange Key (KEK) variables in the firmware. Microsoft has provided the same three certificates across the original equipment manufacturer (OEM) ecosystem to include in the device’s firmware. These certificates support Secure Boot in Windows and are also used by third-party operating systems (OS). The following certificates are provided by Microsoft:

- Microsoft Corporation KEK CA 2011

- Microsoft Windows Production PCA 2011

- Microsoft Corporation UEFI CA 2011

What’s changing?

Current Microsoft Secure Boot certificates (Microsoft Corporation KEK CA 2011, Microsoft Windows Production PCA 2011, Microsoft Corporation UEFI CA 2011) will begin expiring in June 2026, and all of them will have expired by October 2026.

New 2023 certificates are being rolled out to maintain Secure Boot security and continuity. Devices must update to the 2023 certificates before the 2011 certificates expire or they will be out of security compliance and at risk.

Windows devices manufactured since 2012 may have expiring versions of certificates, which must be updated.

Using SCCM to detect and remediate

- Preparing

- There is no need to run this against systems which are using “Legacy” BIOS mode and not UEFI (Microsoft has unfortunately destroyed the word “legacy” by using it inaccurately for sales purposes, but it is the correct term here)

- Create a new collection folder called something along the lines of “SecureBoot2026”

- Create two collections

- Secure.Boot.UEFI.Detected

- select SMS_R_SYSTEM.ResourceID,SMS_R_SYSTEM.ResourceType,SMS_R_SYSTEM.Name,SMS_R_SYSTEM.SMSUniqueIdentifier,SMS_R_SYSTEM.ResourceDomainORWorkgroup,SMS_R_SYSTEM.Client from SMS_R_System inner join SMS_G_System_FIRMWARE on SMS_G_System_FIRMWARE.ResourceID = SMS_R_System.ResourceId where SMS_G_System_FIRMWARE.UEFI = "1"

- Secure.Boot.BIOS.Detected

- select SMS_R_SYSTEM.ResourceID,SMS_R_SYSTEM.ResourceType,SMS_R_SYSTEM.Name,SMS_R_SYSTEM.SMSUniqueIdentifier,SMS_R_SYSTEM.ResourceDomainORWorkgroup,SMS_R_SYSTEM.Client from SMS_R_System inner join SMS_G_System_FIRMWARE on SMS_G_System_FIRMWARE.ResourceId = SMS_R_System.ResourceId where SMS_G_System_FIRMWARE.UEFI = "0"

- Configuration baseline 1 – Detect secure boot

- Create a new configuration item

- Name : Secure Boot – Check Enabled

- Target OS : All

- New setting

-

- Remediation script : None

- Compliance rule :

- Name : Secure Boot – Check Enabled Result

- The value returned by the specified script = True

- Create a baseline

- Name : Secure Boot Enabled Baseline

- Add the configuration item we just created

- Tick “Always apply this baseline even for co-managed clients”

- Deploy the baseline to the “Secure.Boot.UEFI.Detected” collection

This will run against devices that we know are running in UEFI mode and detect whether they have Secure Boot enabled. (Note that the “Confirm-SecureBootUEFI” PowerShell command only exists if a machine has a recent CU level. Patching machines is a large rabbit hole to go down, but if you haven’t patched your machines in the last 3 months, you have larger issues than Secure Boot. Address that first.)
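A minimal sketch of a detection script that would satisfy the compliance rule above (script returns True). This is an illustration, not necessarily the exact script used in the configuration item; the function name `Test-SecureBootEnabled` is made up for this example. It treats any error (legacy BIOS, missing cmdlet, insufficient rights) as False, which lines up with the “NotDetected” naming used for the non-compliant collection.

```powershell
# Sketch of a CI detection script: outputs True only when Secure Boot is
# confirmed on; anything else (off, legacy BIOS, cmdlet missing) gives False.
function Test-SecureBootEnabled {
    try {
        # Confirm-SecureBootUEFI returns $true/$false on UEFI systems and
        # raises an error on non-UEFI or unsupported platforms.
        return [bool](Confirm-SecureBootUEFI -ErrorAction Stop)
    }
    catch {
        return $false
    }
}

# The value written to the pipeline is what the compliance rule evaluates.
Test-SecureBootEnabled
```

Because failures collapse to False, a non-compliant result does not prove Secure Boot is off; it may just mean the check could not run on that machine.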

- From the deployment of the “Secure Boot Enabled Baseline”

- Right-click and go to “create new collection”

- Create one for “Compliant” called “CB-SecureBootEnabled”

- Create another for “Non-compliant” called “CB-SecureBootNotDetected”

- Note : I go with “NotDetected” because there may be a patching issue on some machines. You can check this very easily by adding and looking at the OS build version column in SCCM

- Move these collections from the root (where SCCM puts them) into your “SecureBoot” folder

- Configuration baseline 2 – Detect if the update process has completed

- Create a new configuration item

- Name : Secure Boot Update Completed

- Target OS : All

- New setting

-

- Remediation script : None

- Compliance rule :

- Name : Secure Boot Update Completed Result

- The value returned by the specified script = Updated

- Create a baseline

- Name : Secure Boot Update Completed Baseline

- Add the configuration item we just created

- Tick “Always apply this baseline even for co-managed clients”

- Deploy the baseline to the “CB-SecureBootEnabled” collection

This will run against devices that we know have secure boot enabled.
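A minimal sketch of a detection script that would return “Updated” for the compliance rule above. The registry location used here (`UEFICA2023Status` under `HKLM:\SYSTEM\CurrentControlSet\Control\SecureBoot\Servicing`) is an assumption based on Microsoft’s Secure Boot registry guidance, not something taken from this article, so verify it on a known-good machine before relying on it. The function name and the “Unknown” fallback are also just illustrative.

```powershell
# Sketch of a CI detection script: outputs the servicing status string,
# or 'Unknown' when the value cannot be read.
function Get-SecureBootUpdateStatus {
    # ASSUMPTION: key and value name come from Microsoft's registry guidance.
    $key = 'HKLM:\SYSTEM\CurrentControlSet\Control\SecureBoot\Servicing'
    try {
        $value = (Get-ItemProperty -Path $key -Name 'UEFICA2023Status' -ErrorAction Stop).UEFICA2023Status
        if ([string]::IsNullOrWhiteSpace($value)) { return 'Unknown' }
        return [string]$value
    }
    catch {
        # Key or value missing, or not running on Windows.
        return 'Unknown'
    }
}

# The compliance rule compares this output against "Updated".
Get-SecureBootUpdateStatus
```

Anything other than “Updated” lands the device in the non-compliant collection, which is the behaviour you want for tracking progress.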

- From the deployment of the “Secure Boot Update Completed Baseline”

- Right-click and go to “create new collection”

- Create one for “Compliant” called “CB-SecureBootUpdateComplete”

- Create another for “Non-compliant” called “CB-SecureBootUpdateIncomplete”

- Move these collections from the root (where SCCM puts them) into your “SecureBoot” folder

You now have a structure that will give you up-to-date information on where you are at.

Deployment of the secure boot update

- There are a number of methods you can use here. I prefer an SCCM task sequence, as it allows me to perform other steps, which I will explain below

- Create a new blank task sequence with a name such as “SecureBootUpdate2026”

- First task – Disable BitLocker for x reboots

- When first deploying this for our EUC devices, there was an approx 5% hit rate of devices that prompted for a BitLocker recovery key. Disabling BitLocker first will address that. I went for 4 reboots, but do what’s best for you and your environment.

- Second task – set the secure boot regkey

- Command line : reg add HKEY_LOCAL_MACHINE\SYSTEM\CurrentControlSet\Control\Secureboot /v AvailableUpdates /t REG_DWORD /d 0x5944 /f

- Third task – force the secure boot scheduled task to run

- PowerShell : Start-ScheduledTask -TaskName "\Microsoft\Windows\PI\Secure-Boot-Update"
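If you would rather test the three tasks by hand on a single machine before building the task sequence, they can be sketched as one PowerShell function. This is a rough equivalent rather than the task sequence itself: the function name `Start-SecureBootCertUpdate` is made up, it assumes the BitLocker cmdlets are installed, and it uses `Suspend-BitLocker` in place of the task sequence’s BitLocker step. It supports `-WhatIf` so you can see what it would do without changing anything.

```powershell
function Start-SecureBootCertUpdate {
    [CmdletBinding(SupportsShouldProcess)]
    param(
        # Number of reboots BitLocker stays suspended for (4 in this article).
        [ValidateRange(1, 15)][int]$RebootCount = 4
    )

    $drive = if ($env:SystemDrive) { $env:SystemDrive } else { 'C:' }
    $key   = 'HKLM:\SYSTEM\CurrentControlSet\Control\SecureBoot'

    # Task 1: suspend BitLocker, only if protection is actually on.
    if ($PSCmdlet.ShouldProcess($drive, "Suspend BitLocker for $RebootCount reboots")) {
        $volume = Get-BitLockerVolume -MountPoint $drive -ErrorAction Stop
        if ($volume.ProtectionStatus -eq 'On') {
            Suspend-BitLocker -MountPoint $drive -RebootCount $RebootCount -ErrorAction Stop | Out-Null
        }
    }

    # Task 2: request the certificate updates (same value as the reg add command).
    if ($PSCmdlet.ShouldProcess($key, 'Set AvailableUpdates to 0x5944')) {
        Set-ItemProperty -Path $key -Name 'AvailableUpdates' -Value 0x5944 -Type DWord -ErrorAction Stop
    }

    # Task 3: run the Secure Boot update scheduled task now.
    if ($PSCmdlet.ShouldProcess('Secure-Boot-Update', 'Start scheduled task')) {
        Start-ScheduledTask -TaskPath '\Microsoft\Windows\PI\' -TaskName 'Secure-Boot-Update' -ErrorAction Stop
    }
}
```

Run `Start-SecureBootCertUpdate -WhatIf` first, then run it for real from an elevated session on a test machine.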

Now, if for whatever reason you have not started this process at all yet (yikes!), create a new collection and place a small number of machines in there first in order to test this process. Do not immediately deploy it to everything that is Secure Boot enabled. Once you become more confident with the process, place more machines into that collection.

You could also choose to do your firmware updates as part of this task sequence, but I already have a process in place for this, and to me it makes sense to keep them separate. I want to continue to do firmware updates, but the Secure Boot update won’t be required again in my working lifetime.

Event IDs you will become familiar with

These are all in the System event log

- Event ID 1801 – you will see this event every time the Secure Boot update scheduled task runs, giving you a status. Some of the statuses you may see are listed below (they will be different based on the OS and hardware)

- ✅ 0x5944 → 0x5904 – Windows UEFI CA 2023 applied

- ⏳ 0x5904 → 0x5104 – Microsoft Option ROM UEFI CA 2023 (if needed)

- ⏳ 0x5104 → 0x4104 – Microsoft UEFI CA 2023 (if needed)

- ⏳ 0x4104 → 0x4100 – KEK applied

- ⏳ 0x4100 → 0x4000 – Boot manager updated

- Event ID 1799 – you will often see this on VMs where the process has all but completed. Windows has updated, but it cannot update the Hyper-V or VMware firmware file.

- Event ID 1808 – All done. This is the one we want to see.
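The status transitions listed above each clear one bit of the AvailableUpdates value, so a given value can be decoded into the steps still outstanding. A small sketch, with the bit-to-step mapping derived purely from that list (the function name `Get-PendingSecureBootStep` is made up, and the 0x4000 bit, which stays set at the end of the list, is deliberately not treated as a pending step):

```powershell
function Get-PendingSecureBootStep {
    param([Parameter(Mandatory)][int]$AvailableUpdates)

    # Each entry is the bit cleared by one transition in the list above,
    # e.g. 0x5944 -> 0x5904 clears 0x0040.
    $steps = @(
        [pscustomobject]@{ Bit = 0x0040; Name = 'Windows UEFI CA 2023' }
        [pscustomobject]@{ Bit = 0x0800; Name = 'Microsoft Option ROM UEFI CA 2023' }
        [pscustomobject]@{ Bit = 0x1000; Name = 'Microsoft UEFI CA 2023' }
        [pscustomobject]@{ Bit = 0x0004; Name = 'KEK' }
        [pscustomobject]@{ Bit = 0x0100; Name = 'Boot manager' }
    )

    # Emit the name of every step whose bit is still set.
    foreach ($step in $steps) {
        if ($AvailableUpdates -band $step.Bit) { $step.Name }
    }
}
```

For example, `Get-PendingSecureBootStep -AvailableUpdates 0x4104` lists KEK and Boot manager as still pending, while 0x4000 returns nothing.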

The (many) wrinkles in this process

To say that this has been poorly managed by MS is an understatement.

Wrinkle 1 – Windows updates

In order to update, the device must be running a supported OS version and have Windows updates from a relatively recent timeframe. It’s different for each OS, because the process is not complex enough already, I assume. To make life easy, I just say “March 2026 CU”. If the “Confirm-SecureBootUEFI” cmdlet is not present, then you need to update.

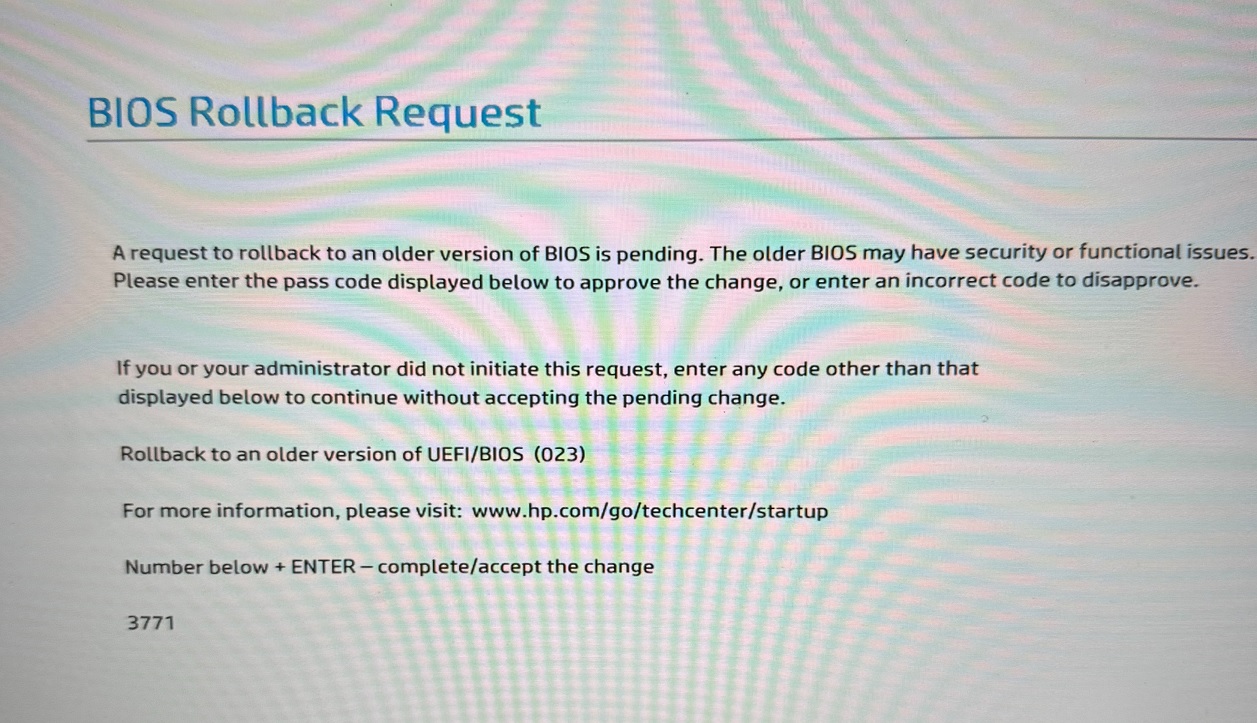

Wrinkle 2 – UEFI updates (which most of us still call BIOS updates)

The UEFI firmware on the device must support this process. The org I’m with now is all HP, so I used https://support.hp.com/us-en/document/ish_13070353-13070429-16 to determine which models could not be updated, and which ones could (and what version was needed).

Now, for reasons I don’t know, it looks like that page has gone offline. I’m not sure if it’s temporary or what, but the short answer is that your UEFI version must support this process. I used HPIA to update the versions fleet-wide, which seemed to work well.

Wrinkle 3 – Hyper-V

Hyper-V will commonly get to Event ID 1799 but not 1808 because it cannot update the firmware file. A helpful commenter in the Secure Boot playbook (referenced at the start of this post) found that if you toggle the firmware setting, it then updates. A script to do this en masse is at the end of this post.

Wrinkle 4 – VMware

Similar to Hyper-V, the NVRAM file cannot be updated. Once the status gets to “Microsoft UEFI CA 2023”, you can rename the NVRAM file (allowing a new one to be created), boot and then reboot again, and you will get 1808.

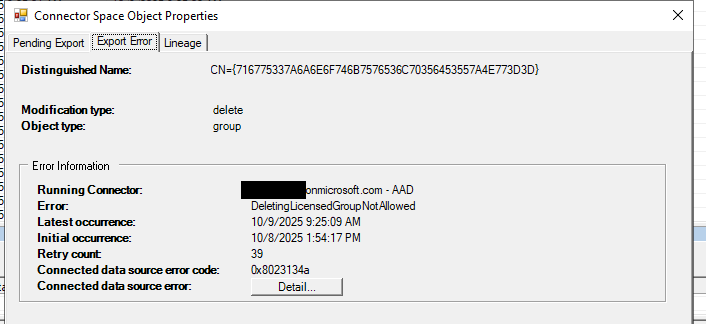

Update 01/05/2026 – VMware has made large updates to its guidance, and it looks like things will be improved in coming patches – https://knowledge.broadcom.com/external/article/423893

Wrinkle 5 – Inconsistency

Even if you have your Windows updates and UEFI firmware up to date and sorted, the process doesn’t seem to be completely consistent.

Set the reg key, run the scheduled task, notice that the machine is in the state “KEK applied”, reboot, run the scheduled task again, get to 1808: completed.

Move on to the next machine and it will require an extra reboot for some reason.

The next machine will get stuck on “KEK applied” for 2-3 reboots for some reason.

A small % of our Hyper-V servers seemed to move to 1808 without the need to toggle the boot file, even though they appear to be identical to all the other Hyper-V servers.

It’s just… inconsistent. It gets there, and I’m sure there are reasons for it, but when you’re trying to get this deployed to thousands of machines, it creates stress. And the fact that there’s no reporting / management tool from MS for this process is just exceedingly shit (in line with the “fuck you, what are you going to do about it?” approach they appear to be taking with customers at the moment)
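For a quick look at where a single machine is at, the three event IDs mentioned earlier can be pulled from the System log. A minimal sketch, run locally and elevated; the function name `Get-SecureBootUpdateEvent` is made up, and it does not filter on provider, so check the ProviderName column in case something unrelated happens to share one of these IDs.

```powershell
function Get-SecureBootUpdateEvent {
    param(
        # How many of the most recent matching events to return.
        [int]$MaxEvents = 20
    )

    # 1801 = status, 1799 = all but complete (common on VMs), 1808 = done.
    $filter = @{ LogName = 'System'; Id = 1799, 1801, 1808 }

    # Get-WinEvent returns newest events first; SilentlyContinue hides the
    # "no events were found" error on machines that have not started yet.
    Get-WinEvent -FilterHashtable $filter -MaxEvents $MaxEvents -ErrorAction SilentlyContinue |
        Select-Object TimeCreated, Id, ProviderName, Message
}
```

Running `Get-SecureBootUpdateEvent -MaxEvents 5 | Format-List` after each reboot shows whether the machine is progressing or stuck.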

ToggleVMFirmwareHyperV.ps1 – toggles the Hyper-V boot file to allow the completion event 1808 to show up

SecureBootCheck.ps1 – allows you to check and optionally run the scheduled task against a list of computers

Get-SecureBootAudit-SCCM.ps1 – runs against SCCM collections to produce a report of machines’ current status. It can be run against different collections (I use it to get EUC, Server and Operations statuses, as these devices are managed by different groups)

Note : the two bottom scripts will sometimes report a “custom state”. This generally means the UEFI firmware requires an update before the device can write to the firmware.